glasses.models package

Subpackages

Submodules

glasses.models.AutoModel module

- class glasses.models.AutoModel.AutoModel

Bases: object

This class returns a model based on its name.

Examples

>>> import torch.nn as nn
>>> from glasses.models.AutoModel import AutoModel
>>> AutoModel.models()  # names of all the models, e.g. 'resnet18', 'resnet26', ...
>>> AutoModel.from_name('resnet18')
>>> AutoModel.from_name('resnet18', activation=nn.SELU)
>>> AutoModel.from_pretrained('resnet18')

- Raises

KeyError – Raised if the name of the model is not found.
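The lookup described above amounts to a name-to-constructor registry. The sketch below assumes `zoo` is a plain dict, as its value listing suggests; `make_resnet18`, `make_resnet26` and `MiniAutoModel` are hypothetical stand-ins, not the library's code.

```python
from typing import Any, Callable, Dict, List


def make_resnet18(activation: str = "relu", n_classes: int = 1000) -> Dict[str, Any]:
    # Hypothetical stand-in for a real constructor such as ResNet.resnet18.
    return {"name": "resnet18", "activation": activation, "n_classes": n_classes}


def make_resnet26(activation: str = "relu", n_classes: int = 1000) -> Dict[str, Any]:
    # Hypothetical stand-in for ResNet.resnet26.
    return {"name": "resnet26", "activation": activation, "n_classes": n_classes}


class MiniAutoModel:
    """Toy registry mirroring the models/from_name behaviour documented here."""

    zoo: Dict[str, Callable[..., Any]] = {
        "resnet18": make_resnet18,
        "resnet26": make_resnet26,
    }

    @staticmethod
    def models() -> List[str]:
        return list(MiniAutoModel.zoo.keys())

    @staticmethod
    def from_name(name: str, *args: Any, **kwargs: Any) -> Any:
        if name not in MiniAutoModel.zoo:
            # Unknown names raise KeyError, as documented for AutoModel.
            raise KeyError(f"No model named {name}. Available: {MiniAutoModel.models()}")
        # Extra arguments (e.g. activation=...) are forwarded to the constructor.
        return MiniAutoModel.zoo[name](*args, **kwargs)
```

For example, `MiniAutoModel.from_name("resnet18", activation="selu")` builds the stand-in with the custom activation, while an unregistered name such as `"resnet99"` raises `KeyError`.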

- static from_name(name: str, *args, **kwargs) -> torch.nn.modules.module.Module

Instantiates the model registered under name; any additional positional and keyword arguments are passed to its constructor.

Examples

>>> import torch.nn as nn
>>> from glasses.models.AutoModel import AutoModel
>>> AutoModel.models()  # names of all the models, e.g. 'resnet18', 'resnet26', ...
>>> AutoModel.from_name('resnet18')
>>> AutoModel.from_name('resnet18', activation=nn.SELU)

- Parameters

name (str) – Name of the model, e.g. ‘resnet18’

- Raises

KeyError – Raised if the name of the model is not found.

- Returns

A fully instantiated model

- Return type

nn.Module

- static from_pretrained(name: str, *args, storage: glasses.utils.weights.storage.Storage.Storage = HuggingFaceStorage(ORGANIZATION='glasses', root=PosixPath('/tmp')), **kwargs) -> torch.nn.modules.module.Module

Instantiates one of the library's models and loads its pretrained weights from storage.

Examples

>>> from glasses.models.AutoModel import AutoModel
>>> AutoModel.pretrained_models()  # names of the models with pretrained weights, e.g. 'resnet18', 'resnet26', ...
>>> AutoModel.from_pretrained('resnet18')

- Parameters

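name (str) – Name of the model, e.g. ‘resnet18’

storage (Storage, optional) – The storage from which to get the pretrained weights. Defaults to HuggingFaceStorage().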

- Raises

KeyError – Raised if the name of the model is not found.

- Returns

A fully instantiated pretrained model

- Return type

nn.Module
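Conceptually, from_pretrained pairs a model constructor with a weight lookup in storage. The sketch below is an illustration under stated assumptions: `ToyModel`, `InMemoryStorage` and the order of the steps are hypothetical, and real glasses models would load weights through torch's `load_state_dict` instead of a plain dict.

```python
from typing import Dict, List


class ToyModel:
    """Hypothetical model that keeps its parameters in a plain dict."""

    def __init__(self) -> None:
        self.params: Dict[str, List[float]] = {"fc.weight": [0.0, 0.0]}

    def load_state_dict(self, state: Dict[str, List[float]]) -> None:
        # Mimics the strict key check of torch's load_state_dict.
        if state.keys() != self.params.keys():
            raise RuntimeError("state dict keys do not match the model")
        self.params = {k: list(v) for k, v in state.items()}


class InMemoryStorage:
    """Hypothetical storage mapping model names to saved parameters."""

    def __init__(self, weights: Dict[str, Dict[str, List[float]]]) -> None:
        self.weights = weights

    @property
    def models(self) -> List[str]:
        return list(self.weights.keys())

    def get(self, name: str) -> Dict[str, List[float]]:
        # A missing name surfaces as KeyError, like the documented behaviour.
        return self.weights[name]


def from_pretrained(name: str, storage: InMemoryStorage) -> ToyModel:
    state = storage.get(name)      # 1. fetch the pretrained weights
    model = ToyModel()             # 2. build the architecture (stand-in for from_name)
    model.load_state_dict(state)   # 3. copy the weights into the model
    return model
```

Passing a different storage object is what lets the same call pull weights from another source, which is presumably why storage is exposed as a parameter.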

- static models() -> List[str]

Lists the names of the available models.

- Returns

The names of all the models in zoo.

- Return type

List[str]

- static models_table(storage: Optional[glasses.utils.weights.storage.Storage.Storage] = HuggingFaceStorage(ORGANIZATION='glasses', root=PosixPath('/tmp'))) -> rich.table.Table

Shows a nicely formatted table of all the available models.

- Parameters

storage (Storage, optional) – The storage from which to get the pretrained weights. Defaults to HuggingFaceStorage().

- Returns

A table listing the available models.

- Return type

Table

- static pretrained_models(storage: Optional[glasses.utils.weights.storage.Storage.Storage] = HuggingFaceStorage(ORGANIZATION='glasses', root=PosixPath('/tmp'))) -> List[str]

Lists the names of the models that have pretrained weights.

- Parameters

storage (Storage, optional) – The storage from which to get the pretrained weights. Defaults to HuggingFaceStorage().

- Returns

The names of the available pretrained models.

- Return type

List[str]

- zoo: dict mapping each model name to the callable that builds it. Most values are bound classmethods of the corresponding architecture class in glasses.models.classification (for example, 'resnet18' maps to ResNet.resnet18); 'unet' maps to the class glasses.models.segmentation.unet.UNet itself. The available names, grouped by class:

- DeiT: deit_base_patch16_224, deit_base_patch16_384, deit_small_patch16_224, deit_tiny_patch16_224
- DenseNet: densenet121, densenet161, densenet169, densenet201
- EfficientNet: efficientnet_b0, efficientnet_b1, efficientnet_b2, efficientnet_b3, efficientnet_b4, efficientnet_b5, efficientnet_b6, efficientnet_b7, efficientnet_b8, efficientnet_l2
- EfficientNetLite: efficientnet_lite0, efficientnet_lite1, efficientnet_lite2, efficientnet_lite3, efficientnet_lite4
- FishNet: fishnet99, fishnet150
- MobileNet: mobilenet_v2
- RegNet: regnetx_002, regnetx_004, regnetx_006, regnetx_008, regnetx_016, regnetx_032, regnetx_040, regnetx_064, regnetx_080, regnety_002, regnety_004, regnety_006, regnety_008, regnety_016, regnety_032, regnety_040, regnety_064, regnety_080
- ResNeSt: resnest14d, resnest26d, resnest50d, resnest50d_1s4x24d, resnest50d_4s2x40d, resnest101e, resnest200e, resnest269e
- ResNet: resnet18, resnet26, resnet26d, resnet34, resnet34d, resnet50, resnet50d, resnet101, resnet152, resnet200
- ResNet with ECA attention: eca_resnet18d, eca_resnet18t, eca_resnet26d, eca_resnet26t, eca_resnet50d, eca_resnet50t, eca_resnet101d, eca_resnet101t. Each is a functools.partial over a ResNet constructor (ResNet.resnet18 for eca_resnet18t; ResNet.resnet26 for eca_resnet18d, eca_resnet26d and eca_resnet26t; ResNet.resnet50 for eca_resnet50d and eca_resnet50t; ResNet.resnet101 for eca_resnet101d and eca_resnet101t), with stem=ResNetStem3x3 (widths=[32, 32] for the *d variants, widths=[24, 32] for the *t variants) and a block wrapped by glasses.nn.att.utils.WithAtt.
- ResNetXt: resnext50_32x4d, resnext101_32x8d, resnext101_32x16d, resnext101_32x32d, resnext101_32x48d
- SEResNet: se_resnet18, se_resnet34, se_resnet50, se_resnet101, se_resnet152
- UNet: unet
- VGG: vgg11, vgg11_bn, vgg13, vgg13_bn, vgg16, vgg16_bn, vgg19, vgg19_bn
- ViT: vit_small_patch16_224, vit_base_patch16_224, vit_base_patch16_384, vit_base_patch32_384, vit_large_patch16_224, vit_large_patch16_384, vit_large_patch32_384, vit_huge_patch16_224, vit_huge_patch32_384
- WideResNet: wide_resnet50_2, wide_resnet101_2
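The eca_* entries above are functools.partial objects: a base ResNet constructor with its stem and block arguments pre-bound. The sketch below reproduces that registration pattern with a hypothetical `resnet50` stand-in and string placeholders for the stem and block classes.

```python
from functools import partial
from typing import Any, Dict


def resnet50(stem: str = "default", block: str = "basic", n_classes: int = 1000) -> Dict[str, Any]:
    # Hypothetical stand-in for ResNet.resnet50: records how it was configured.
    return {"depth": 50, "stem": stem, "block": block, "n_classes": n_classes}


zoo = {
    "resnet50": resnet50,
    # Variants reuse the base constructor with some keyword arguments pre-bound
    # (placeholder strings instead of ResNetStem3x3 / WithAtt-wrapped blocks).
    "eca_resnet50d": partial(resnet50, stem="stem3x3_32_32", block="eca_block"),
    "eca_resnet50t": partial(resnet50, stem="stem3x3_24_32", block="eca_block"),
}
```

Because a partial merges its stored keywords with those given at call time, `zoo["eca_resnet50d"](n_classes=10)` still accepts the usual arguments, and a call-time `block=...` overrides the pre-bound one.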

glasses.models.AutoTransform module

- class glasses.models.AutoTransform.AutoTransform

Bases: object

- static from_name(name: str) -> functools.partial(<class 'glasses.models.AutoTransform.Transform'>, input_size=224, resize=256, mean=tensor([0.4850, 0.4560, 0.4060]), std=tensor([0.2290, 0.2240, 0.2250]))

Returns the preprocessing transformation associated with a given model name. If the name is not found, it returns the default one.

Examples

>>> from glasses.models.AutoTransform import AutoTransform
>>> AutoTransform.from_name('resnet18')

The returned transformation is the preprocessing you should apply to your inputs:

>>> tr = AutoTransform.from_name('resnet18')

- Parameters

name (str) – Name of the model, e.g. ‘resnet18’

- Returns

The model’s preprocessing transformation

- Return type

Transform
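Unlike AutoModel.from_name, this lookup falls back to a default instead of raising. A minimal sketch of that behaviour, assuming the zoo is a plain dict; `TRANSFORMS` is a hypothetical, trimmed-down copy of two zoo entries, with sizes taken from the listing.

```python
from typing import Dict

# Hypothetical, trimmed-down copy of two zoo entries (values from the listing).
TRANSFORMS: Dict[str, Dict[str, object]] = {
    "default": {"resize": 256, "input_size": 224, "interpolation": "bilinear"},
    "efficientnet_b3": {"resize": 300, "input_size": 300, "interpolation": "bicubic"},
}


def transform_for(name: str) -> Dict[str, object]:
    # Unknown names do not raise: they fall back to the "default" entry.
    return TRANSFORMS.get(name, TRANSFORMS["default"])
```

In the zoo listing, resnet18 has no dedicated entry, so `from_name('resnet18')` would receive the default transformation (resize 256, crop 224).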

- zoo: dict mapping model names to their Transform. Every entry is Resize(size, interpolation, max_size=None, antialias=None), then CenterCrop, then ToTensor, then Normalize(mean, std); unet has no Normalize step. Below, "ImageNet statistics" means mean=(0.485, 0.456, 0.406), std=(0.229, 0.224, 0.225), and "0.5 statistics" means mean=std=(0.5, 0.5, 0.5).

- default: resize 256 (bilinear), crop 224, ImageNet statistics
- deit_base_patch16_224, deit_small_patch16_224, deit_tiny_patch16_224: resize 224 (bicubic), crop 224, 0.5 statistics
- deit_base_patch16_384: resize 384 (bicubic), crop 384, 0.5 statistics
- eca_resnet18d, eca_resnet26d, eca_resnet50d, eca_resnet101d: resize 256 (bicubic), crop 224, ImageNet statistics
- eca_resnet26t, eca_resnet50t, eca_resnet101t: resize 320 (bicubic), crop 320, ImageNet statistics
- efficientnet_b0 (224), efficientnet_b1 (240), efficientnet_b2 (260), efficientnet_b3 (300), efficientnet_b4 (380), efficientnet_b5 (456), efficientnet_b6 (528), efficientnet_b7 (600), efficientnet_b8 (672), efficientnet_l2 (800): resize and crop both equal to the size in parentheses (bicubic), ImageNet statistics
- efficientnet_lite0 (224), efficientnet_lite1 (240), efficientnet_lite2 (260), efficientnet_lite3 (280), efficientnet_lite4 (300): resize and crop both equal to the size in parentheses (bicubic), 0.5 statistics
- regnetx_002, regnetx_004, regnetx_006, regnetx_008, regnetx_016, regnetx_032, regnetx_040, regnetx_064, regnetx_080, regnety_002, regnety_004, regnety_006, regnety_008, regnety_016, regnety_032, regnety_040, regnety_064, regnety_080: resize 256 (bicubic), crop 224, ImageNet statistics
- resnest200e: resize 320 (bicubic), crop 320, ImageNet statistics
- resnest269e: resize 461 (bicubic), crop 416, ImageNet statistics
- resnet26, resnet26d, resnet50d: resize 256 (bicubic), crop 224, ImageNet statistics
- unet: resize 384 (bilinear), crop 384, no normalization
- vit_small_patch16_224, vit_base_patch16_224, vit_large_patch16_224, vit_huge_patch16_224: resize 224 (bicubic), crop 224, 0.5 statistics
- vit_base_patch16_384, vit_base_patch32_384, vit_large_patch16_384, vit_large_patch32_384, vit_huge_patch32_384: resize 384 (bicubic), crop 384, 0.5 statistics
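Each entry resizes the shorter image side to the resize value and then center-crops an input_size × input_size window. The pure-Python sketch below computes that geometry, following the integer rounding that torchvision's Resize (with an int size) and CenterCrop use; it is an illustration of the arithmetic, not a reimplementation of those transforms.

```python
from typing import Tuple


def resize_shorter_side(width: int, height: int, size: int) -> Tuple[int, int]:
    """Output (width, height) after resizing the shorter side to `size`."""
    if width <= height:
        return size, int(size * height / width)
    return int(size * width / height), size


def center_crop_box(width: int, height: int, crop: int) -> Tuple[int, int, int, int]:
    """(left, top, right, bottom) of a centered crop x crop window."""
    left = int(round((width - crop) / 2.0))
    top = int(round((height - crop) / 2.0))
    return left, top, left + crop, top + crop


# Default entry on a 640x480 image: resize 256, then crop 224.
resized = resize_shorter_side(640, 480, 256)   # (341, 256)
box = center_crop_box(*resized, 224)           # (58, 16, 282, 240)
```

With the default entry, the crop keeps 224 / 256 = 0.875 of the resized shorter side; entries where resize equals the crop size (e.g. efficientnet_b3) only trim the longer side.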

- class glasses.models.AutoTransform.Transform(input_size: int, resize: int, std: Tuple[float], mean: Tuple[float], interpolation: str = 'bilinear', transforms: List[Callable] = [])

Bases: torchvision.transforms.transforms.Compose

As the zoo entries above show, the resulting pipeline is Resize, CenterCrop, ToTensor and, in most cases, Normalize.

- interpolations = {'bicubic': InterpolationMode.BICUBIC, 'bilinear': InterpolationMode.BILINEAR}
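The last two steps are simple per-channel arithmetic: ToTensor scales 8-bit values to [0, 1], and Normalize computes (x - mean) / std. The sketch below applies them to a single RGB pixel in pure Python, using the two sets of statistics that appear in the zoo.

```python
from typing import List, Sequence

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)
HALF = (0.5, 0.5, 0.5)


def normalize_pixel(rgb: Sequence[int], mean: Sequence[float], std: Sequence[float]) -> List[float]:
    # ToTensor: 8-bit value -> [0, 1]; Normalize: (x - mean) / std, channel by channel.
    return [(value / 255.0 - m) / s for value, m, s in zip(rgb, mean, std)]
```

With the 0.5 statistics used by the ViT, DeiT and EfficientNet-Lite entries, black maps to -1 and white to 1; with ImageNet statistics a pure red channel becomes (1 - 0.485) / 0.229 ≈ 2.249.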

Module contents

- class glasses.models.AlexNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.alexnet.AlexNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.alexnet.AlexNetHead'>, **kwargs)

Bases: glasses.models.classification.base.ClassificationModule

Implementation of AlexNet, proposed in ImageNet Classification with Deep Convolutional Neural Networks, following the variant implemented in torchvision.

net = AlexNet()

Examples

import torch.nn as nn
from glasses.models import AlexNet

# change activation
AlexNet(activation=nn.SELU)
# change number of classes (default is 1000)
AlexNet(n_classes=100)
# pass a different block
AlexNet(block=SENetBasicBlock)

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Default is 3.

n_classes (int, optional) – Number of classes. Default is 1000.

- training: bool

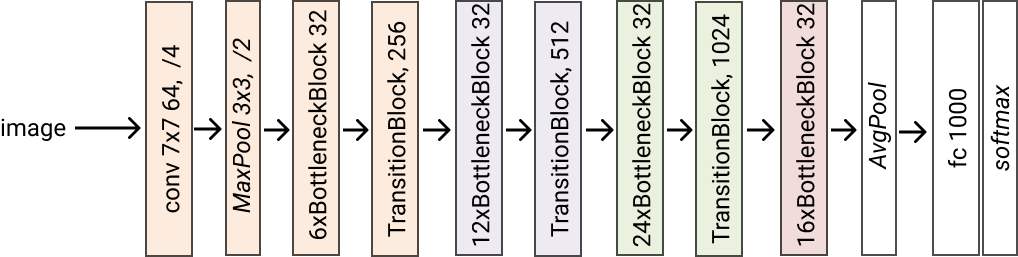

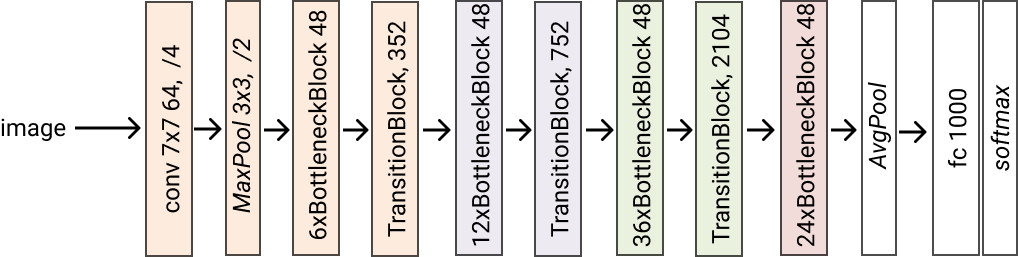

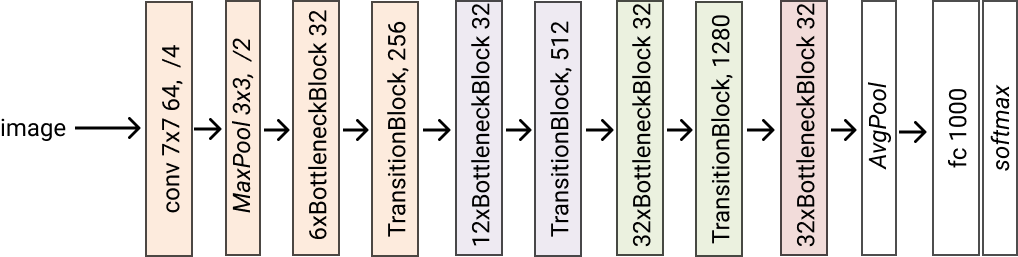

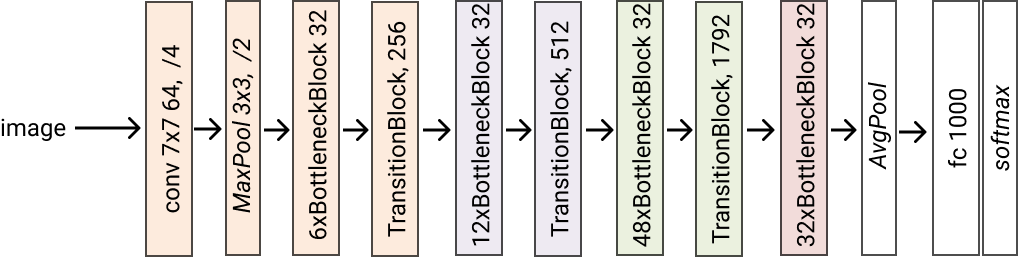

- class glasses.models.DenseNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.densenet.DenseNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetHead'>, *args, **kwargs)

Bases: glasses.models.classification.base.ClassificationModule

Implementation of DenseNet proposed in Densely Connected Convolutional Networks

Create a default model:

DenseNet.densenet121()
DenseNet.densenet161()
DenseNet.densenet169()
DenseNet.densenet201()

Examples

import torch
import torch.nn as nn
from glasses.models import DenseNet

# change activation
DenseNet.densenet121(activation=nn.SELU)
# change number of classes (default is 1000)
DenseNet.densenet121(n_classes=100)
# pass a different block
DenseNet.densenet121(block=...)
# change the initial convolution
model = DenseNet.densenet121()
model.encoder.gate.conv1 = nn.Conv2d(3, 64, kernel_size=3)
# store each feature
x = torch.rand((1, 3, 224, 224))
model = DenseNet.densenet121()
# first access .features: this activates the forward hooks and tells the model you would like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([f.shape for f in features])
# [torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14]), torch.Size([1, 512, 7, 7]), torch.Size([1, 1024, 7, 7])]

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.


- classmethod densenet121(*args, **kwargs) glasses.models.classification.densenet.DenseNet[source]¶

Creates a densenet121 model. Growth rate is set to 32.

- Returns

A densenet121 model

- Return type

glasses.models.classification.densenet.DenseNet

- classmethod densenet161(*args, **kwargs) glasses.models.classification.densenet.DenseNet[source]¶

Creates a densenet161 model. Growth rate is set to 48.

- Returns

A densenet161 model

- Return type

glasses.models.classification.densenet.DenseNet

- classmethod densenet169(*args, **kwargs) glasses.models.classification.densenet.DenseNet[source]¶

Creates a densenet169 model. Growth rate is set to 32.

- Returns

A densenet169 model

- Return type

glasses.models.classification.densenet.DenseNet

- classmethod densenet201(*args, **kwargs) glasses.models.classification.densenet.DenseNet[source]¶

Creates a densenet201 model. Growth rate is set to 32.

- Returns

A densenet201 model

- Return type

glasses.models.classification.densenet.DenseNet
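The growth rate is the number of feature maps each layer of a dense block adds to its input, so the channel count grows linearly inside a block and is then compressed by the transition layers. The sketch below is plain Python and only does the channel bookkeeping. The block configurations and the 0.5 compression factor are assumptions taken from the original DenseNet paper, and `DENSENET_CONFIGS` and `densenet_channels` are hypothetical names, not part of glasses.

```python
# Hypothetical table: values assumed from the original DenseNet paper,
# not read from glasses itself.
DENSENET_CONFIGS = {
    # name: (initial features, growth rate, layers per dense block)
    "densenet121": (64, 32, (6, 12, 24, 16)),
    "densenet161": (96, 48, (6, 12, 36, 24)),
    "densenet169": (64, 32, (6, 12, 32, 32)),
    "densenet201": (64, 32, (6, 12, 48, 32)),
}


def densenet_channels(init_features, growth_rate, block_layers, compression=0.5):
    """Return the number of channels at the end of each stage."""
    channels = init_features
    stages = []
    for i, n_layers in enumerate(block_layers):
        # dense block: every layer concatenates growth_rate new feature maps
        channels += n_layers * growth_rate
        if i < len(block_layers) - 1:
            # transition layer (not after the last block) compresses the channels
            channels = int(channels * compression)
        stages.append(channels)
    return stages


for name, config in DENSENET_CONFIGS.items():
    print(name, densenet_channels(*config))
```

Under these assumptions densenet121 yields 128, 256, 512 and 1024 channels per stage, which agrees with the feature shapes printed in the DenseNet example.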

- forward(x: torch.Tensor) torch.Tensor[source]¶

Defines the computation performed at every call.

Should be overridden by all subclasses.

Note

Although the recipe for forward pass needs to be defined within this function, one should call the Module instance afterwards instead of this since the former takes care of running the registered hooks while the latter silently ignores them.

- training: bool¶

- class glasses.models.EfficientNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetHead'>, *args, **kwargs)[source]¶

Bases:

glasses.models.classification.base.ClassificationModule

Implementation of EfficientNet proposed in EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks

The basic architecture is similar to MobileNetV2 and was found with a neural architecture search.

The following table shows the basic architecture (EfficientNet-B0, created with efficientnet_b0):

Then, the architecture is scaled up from efficientnet_b0 to efficientnet_b7 using compound scaling.

EfficientNet.efficientnet_b0()
EfficientNet.efficientnet_b1()
EfficientNet.efficientnet_b2()
EfficientNet.efficientnet_b3()
EfficientNet.efficientnet_b4()
EfficientNet.efficientnet_b5()
EfficientNet.efficientnet_b6()
EfficientNet.efficientnet_b7()
EfficientNet.efficientnet_b8()
EfficientNet.efficientnet_l2()

Examples

import torch
import torch.nn as nn
from glasses.models import EfficientNet
# change activation
EfficientNet.efficientnet_b0(activation = nn.SELU)
# change number of classes (default is 1000)
EfficientNet.efficientnet_b0(n_classes=100)
# pass a different block
EfficientNet.efficientnet_b0(block=...)
# store each feature
x = torch.rand((1, 3, 224, 224))
model = EfficientNet.efficientnet_b0()
# first access .features: this activates the forward hooks and tells the model you would like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 32, 112, 112]), torch.Size([1, 24, 56, 56]), torch.Size([1, 40, 28, 28]), torch.Size([1, 80, 14, 14])]

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- default_depths: List[int] = [1, 2, 2, 3, 3, 4, 1]¶

- default_widths: List[int] = [32, 16, 24, 40, 80, 112, 192, 320, 1280]¶

- classmethod efficientnet_b0(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b1(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b2(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b3(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b4(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b5(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b6(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b7(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_b8(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_l2(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod from_config(config, key, *args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- models_config = {'efficientnet_b0': (1.0, 1.0, 0.2), 'efficientnet_b1': (1.0, 1.1, 0.2), 'efficientnet_b2': (1.1, 1.2, 0.3), 'efficientnet_b3': (1.2, 1.4, 0.3), 'efficientnet_b4': (1.4, 1.8, 0.4), 'efficientnet_b5': (1.6, 2.2, 0.4), 'efficientnet_b6': (1.8, 2.6, 0.5), 'efficientnet_b7': (2.0, 3.1, 0.5), 'efficientnet_b8': (2.2, 3.6, 0.5), 'efficientnet_l2': (4.3, 5.3, 0.5)}¶
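Compound scaling multiplies the widths and depths of the base network by per-model coefficients. Reading each models_config tuple as (width multiplier, depth multiplier, dropout rate) is an assumption, as is the rounding rule, which is borrowed from the reference TensorFlow EfficientNet code. The helpers below are hypothetical and only illustrate the arithmetic on default_widths and default_depths.

```python
import math

# Copied from default_widths, default_depths and models_config above.
DEFAULT_WIDTHS = [32, 16, 24, 40, 80, 112, 192, 320, 1280]
DEFAULT_DEPTHS = [1, 2, 2, 3, 3, 4, 1]
MODELS_CONFIG = {
    "efficientnet_b0": (1.0, 1.0, 0.2), "efficientnet_b1": (1.0, 1.1, 0.2),
    "efficientnet_b2": (1.1, 1.2, 0.3), "efficientnet_b3": (1.2, 1.4, 0.3),
    "efficientnet_b4": (1.4, 1.8, 0.4), "efficientnet_b5": (1.6, 2.2, 0.4),
    "efficientnet_b6": (1.8, 2.6, 0.5), "efficientnet_b7": (2.0, 3.1, 0.5),
    "efficientnet_b8": (2.2, 3.6, 0.5), "efficientnet_l2": (4.3, 5.3, 0.5),
}


def round_filters(filters, width_mult, divisor=8):
    """Scale a channel count and round it to a multiple of divisor.

    Assumed rounding rule (reference TensorFlow code); glasses may differ.
    """
    scaled = filters * width_mult
    new_filters = max(divisor, int(scaled + divisor / 2) // divisor * divisor)
    if new_filters < 0.9 * scaled:  # never round down by more than 10%
        new_filters += divisor
    return int(new_filters)


def round_repeats(repeats, depth_mult):
    """Scale the number of blocks in a stage, always rounding up."""
    return int(math.ceil(depth_mult * repeats))


def scale(name):
    # the third value (assumed dropout rate) does not affect the shapes
    width_mult, depth_mult, _dropout = MODELS_CONFIG[name]
    widths = [round_filters(w, width_mult) for w in DEFAULT_WIDTHS]
    depths = [round_repeats(d, depth_mult) for d in DEFAULT_DEPTHS]
    return widths, depths


print(scale("efficientnet_b2"))
# ([32, 16, 24, 48, 88, 120, 208, 352, 1408], [2, 3, 3, 4, 4, 5, 2])
```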

- class glasses.models.EfficientNetLite(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetHead'>, *args, **kwargs)[source]¶

Bases:

glasses.models.classification.efficientnet.EfficientNet

Implementation of EfficientNetLite proposed in Higher accuracy on vision models with EfficientNet-Lite

Main differences from the EfficientNet implementation are:

- Removed squeeze-and-excitation networks since they are not well supported
- Replaced all swish activations with ReLU6, which significantly improved the quality of post-training quantization
- Fixed the stem and head while scaling models up in order to reduce the size and computations of scaled models

Examples

Create a default model

>>> EfficientNetLite.efficientnet_lite0()
>>> EfficientNetLite.efficientnet_lite1()
>>> EfficientNetLite.efficientnet_lite2()
>>> EfficientNetLite.efficientnet_lite3()
>>> EfficientNetLite.efficientnet_lite4()

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod efficientnet_lite0(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_lite1(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_lite2(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_lite3(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod efficientnet_lite4(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- classmethod from_config(config, key, *args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- models_config = {'efficientnet_lite0': (1.0, 1.0, 0.2), 'efficientnet_lite1': (1.0, 1.1, 0.2), 'efficientnet_lite2': (1.1, 1.2, 0.3), 'efficientnet_lite3': (1.2, 1.4, 0.3), 'efficientnet_lite4': (1.4, 1.8, 0.3)}¶
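Fixing the stem and head means the first and last entries of the width list are left unscaled. A minimal sketch, assuming the same TensorFlow-style rounding as EfficientNet and that only the inner widths are multiplied (the `lite_widths` helper is hypothetical, not a glasses function):

```python
# default_widths of EfficientNet, listed above
DEFAULT_WIDTHS = [32, 16, 24, 40, 80, 112, 192, 320, 1280]


def round_filters(filters, width_mult, divisor=8):
    # assumed rounding rule, borrowed from the reference TensorFlow code
    scaled = filters * width_mult
    new_filters = max(divisor, int(scaled + divisor / 2) // divisor * divisor)
    if new_filters < 0.9 * scaled:
        new_filters += divisor
    return int(new_filters)


def lite_widths(width_mult):
    stem, *body, head = DEFAULT_WIDTHS
    # only the inner stages are scaled; stem and head keep their width
    return [stem] + [round_filters(w, width_mult) for w in body] + [head]


print(lite_widths(1.4))  # width multiplier of efficientnet_lite4 in models_config
# [32, 24, 32, 56, 112, 160, 272, 448, 1280]
```

With the efficientnet_lite4 width multiplier of 1.4 the stem stays at 32 and the head at 1280 channels, whereas scaling every width with the same rule would give 48 and 1792.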

- training: bool¶

- class glasses.models.FishNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.fishnet.FishNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.fishnet.FishNetHead'>, *args, **kwargs)[source]¶

Bases:

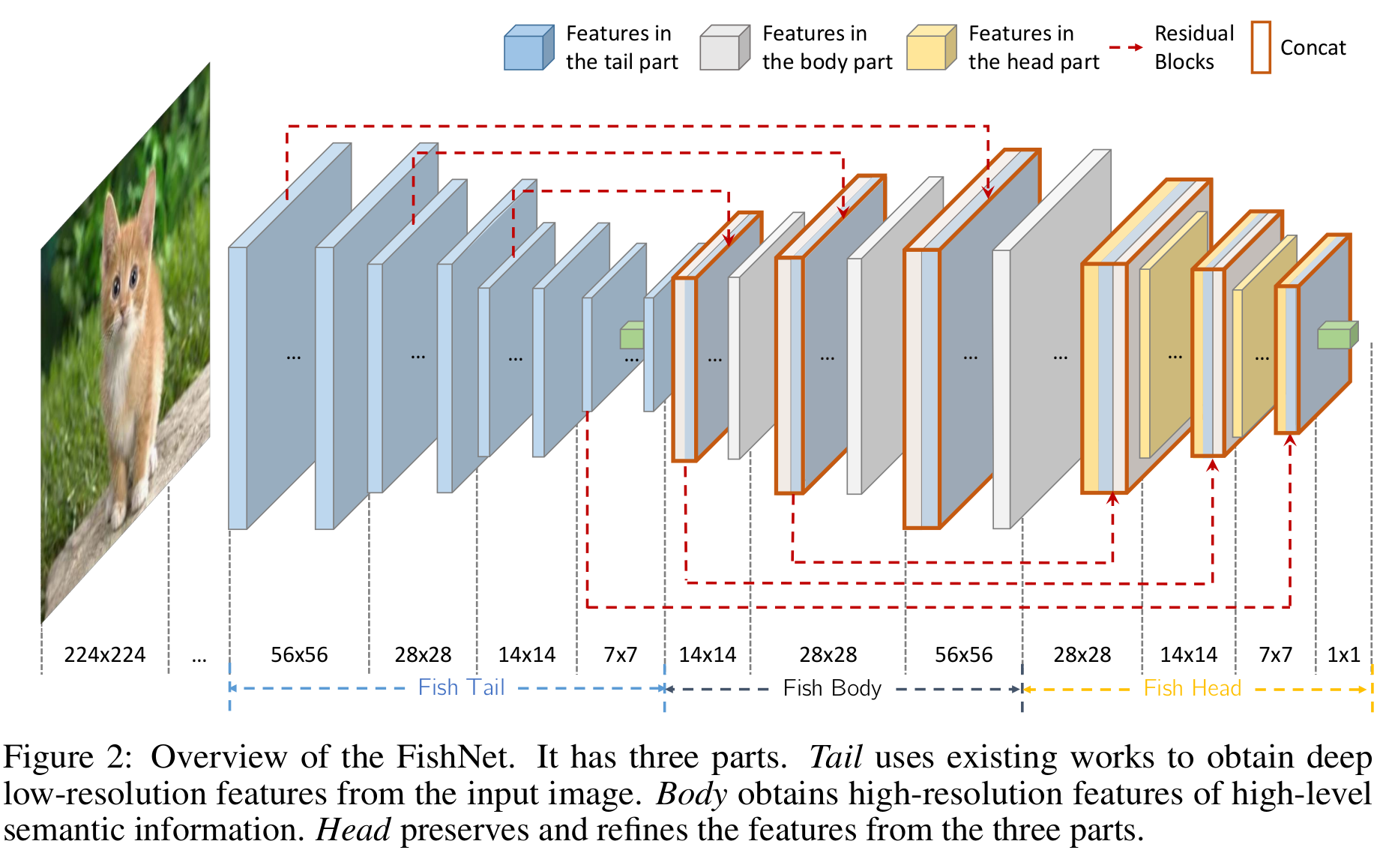

glasses.models.classification.base.ClassificationModule

Implementation of FishNet proposed in FishNet: A Versatile Backbone for Image, Region, and Pixel Level Prediction

Honestly, this model is very weird, and the paper has some mistakes that nobody ever cared to correct. It is a nice idea, but it could have been described better and definitely implemented better. The author’s code is terrible, so I based most of my implementation on this amazing repo: Fishnet-PyTorch.

The following image is taken from the paper and shows the architecture in detail.

FishNet.fishnet99()
FishNet.fishnet150()

Examples

import torch.nn as nn
# change activation
FishNet.fishnet99(activation = nn.SELU)
# change number of classes (default is 1000)
FishNet.fishnet99(n_classes=100)
# pass a different block
block = lambda in_ch, out_ch, **kwargs: nn.Sequential(FishNetBottleNeck(in_ch, out_ch), SpatialSE(out_ch))
FishNet.fishnet99(block=block)

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod fishnet150(*args, **kwargs) glasses.models.classification.fishnet.FishNet[source]¶

Return a fishnet150 model

- Returns

A fishnet150 model

- Return type

glasses.models.classification.fishnet.FishNet

- classmethod fishnet99(*args, **kwargs) glasses.models.classification.fishnet.FishNet[source]¶

Return a fishnet99 model

- Returns

A fishnet99 model

- Return type

glasses.models.classification.fishnet.FishNet

- training: bool¶

- class glasses.models.MobileNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.efficientnet.EfficientNetHead'>, *args, **kwargs)[source]¶

Bases:

glasses.models.classification.efficientnet.EfficientNet

Implementation of MobileNet v2 proposed in MobileNetV2: Inverted Residuals and Linear Bottlenecks

MobileNet is a special case of EfficientNet.

MobileNet.mobilenet_v2()

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod mobilenet_v2(*args, **kwargs) glasses.models.classification.efficientnet.EfficientNet[source]¶

- training: bool¶

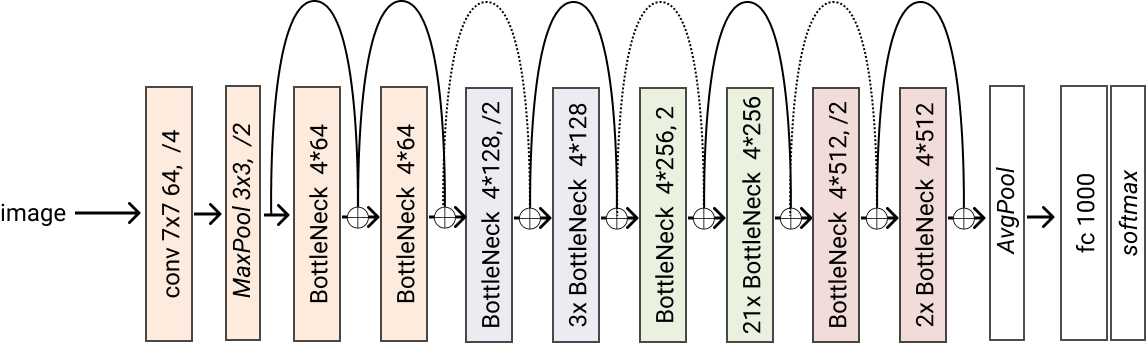

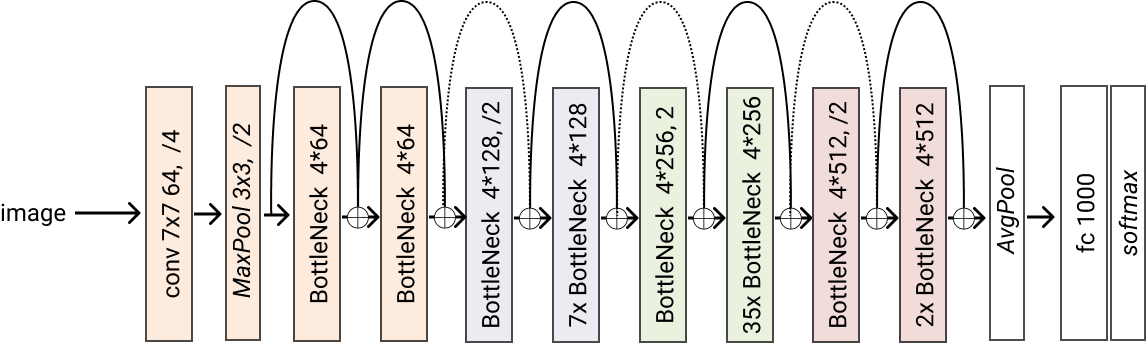

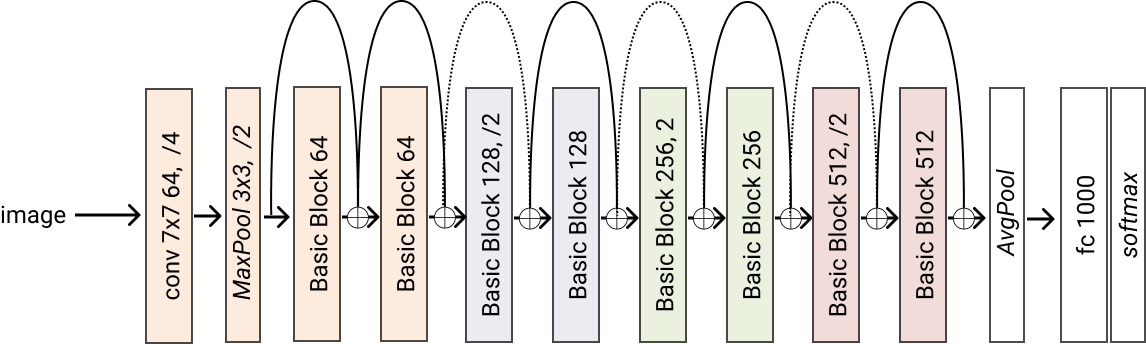

- class glasses.models.ResNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetHead'>, **kwargs)[source]¶

Bases:

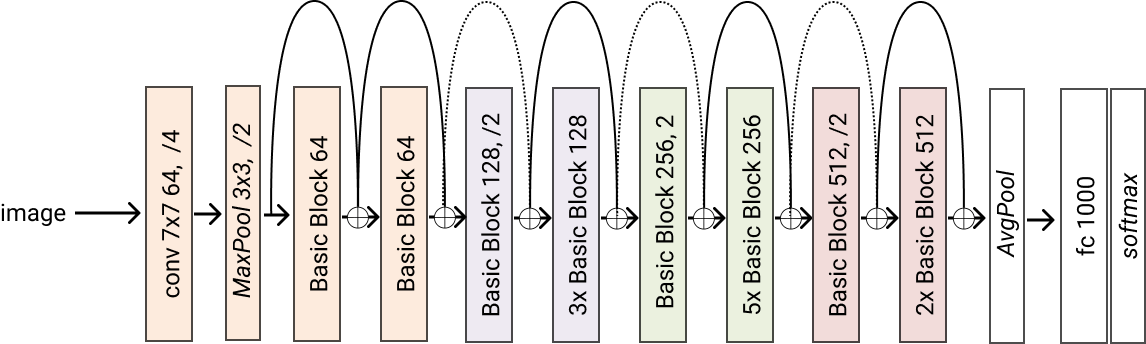

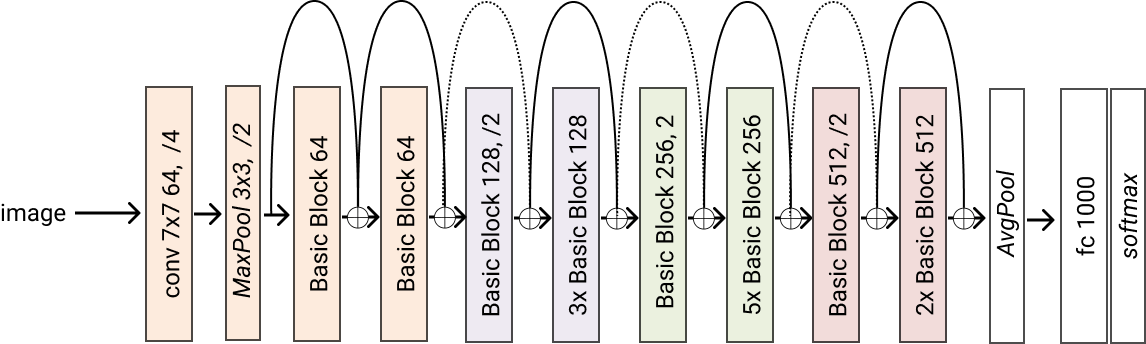

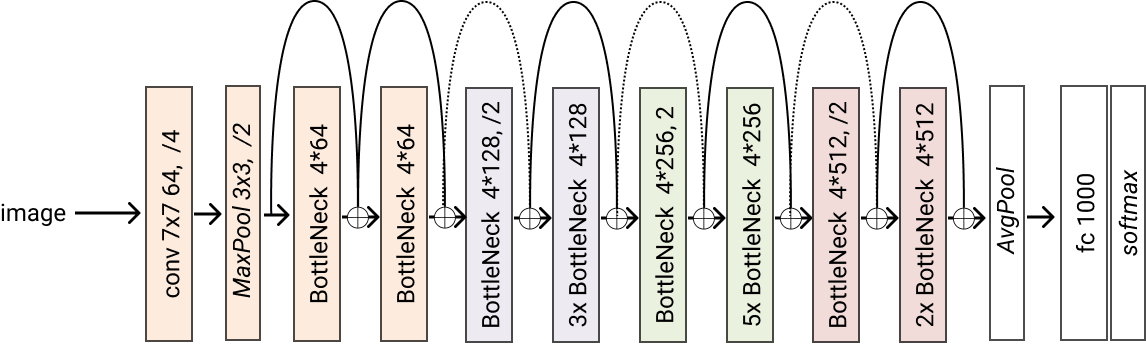

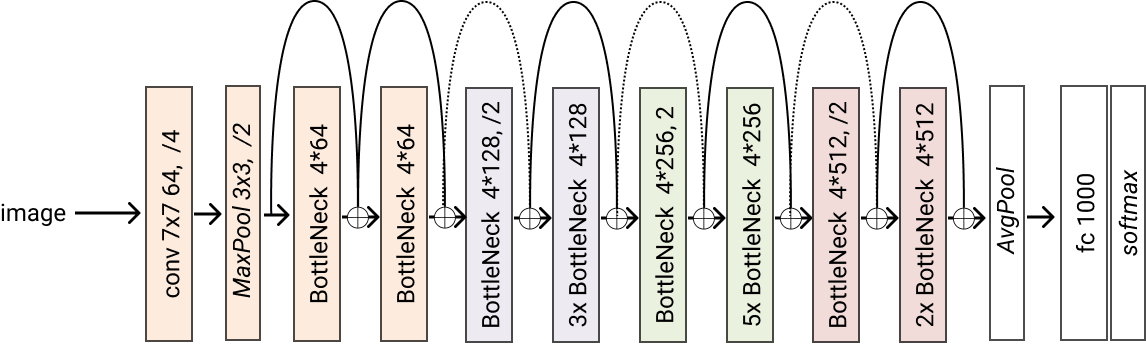

glasses.models.classification.base.ClassificationModule

Implementation of ResNet proposed in Deep Residual Learning for Image Recognition

ResNet.resnet18()
ResNet.resnet26()
ResNet.resnet34()
ResNet.resnet50()
ResNet.resnet101()
ResNet.resnet152()
ResNet.resnet200()
# Variants (d) proposed in Bag of Tricks for Image Classification with Convolutional Neural Networks (https://arxiv.org/pdf/1812.01187.pdf)
ResNet.resnet26d()
ResNet.resnet34d()
ResNet.resnet50d()
# You can construct your own by changing `stem` and `block`
from functools import partial
resnet101d = ResNet.resnet101(stem=ResNetStemC, block=partial(ResNetBottleneckBlock, shortcut=ResNetShorcutD))

Examples

import torch
import torch.nn as nn
from functools import partial
from glasses.models import ResNet
# change activation
ResNet.resnet18(activation = nn.SELU)
# change number of classes (default is 1000)
ResNet.resnet18(n_classes=100)
# pass a different block
ResNet.resnet18(block=SENetBasicBlock)
# change the stem
model = ResNet.resnet18(stem=ResNetStemC)
# change the shortcut
model = ResNet.resnet18(block=partial(ResNetBasicBlock, shortcut=ResNetShorcutD))
# store each feature
x = torch.rand((1, 3, 224, 224))
model = ResNet.resnet18()
# first access .features: this activates the forward hooks and tells the model you would like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 64, 112, 112]), torch.Size([1, 64, 56, 56]), torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14])]
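The spatial sizes in the printed feature shapes can be checked by hand with the usual convolution output formula, floor((n + 2p - k) / s) + 1. This sketch assumes the default ResNet stem (a 7x7 convolution with stride 2 and padding 3, then a 3x3 max pool with stride 2 and padding 1) and one stride-2 downsampling at the start of each later stage; other stems such as ResNetStemC may use different layers. `conv_out` is a hypothetical helper.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length along one spatial axis of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


size = 224
size = conv_out(size, kernel=7, stride=2, padding=3)  # assumed stem: 7x7 conv, stride 2
after_stem_conv = size
size = conv_out(size, kernel=3, stride=2, padding=1)  # assumed stem: 3x3 max pool, stride 2
stages = [size]                                       # the first stage keeps the resolution
for _ in range(3):
    # each later stage starts with a stride-2 3x3 convolution (padding 1)
    size = conv_out(size, kernel=3, stride=2, padding=1)
    stages.append(size)

print(after_stem_conv, stages)  # 112 [56, 28, 14, 7]
```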

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod resnet101(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet101 model

- Returns

A resnet101 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet152(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet152 model

- Returns

A resnet152 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet18(*args, block=<class 'glasses.models.classification.resnet.ResNetBasicBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet18 model

- Returns

A resnet18 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet200(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs)[source]¶

Creates a resnet200 model

- Returns

A resnet200 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet26(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet26 model

- Returns

A resnet26 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet26d(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet26d model

- Returns

A resnet26d model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet34(*args, block=<class 'glasses.models.classification.resnet.ResNetBasicBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet34 model

- Returns

A resnet34 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet34d(*args, block=<class 'glasses.models.classification.resnet.ResNetBasicBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet34d model

- Returns

A resnet34d model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet50(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet50 model

- Returns

A resnet50 model

- Return type

glasses.models.classification.resnet.ResNet

- classmethod resnet50d(*args, block=<class 'glasses.models.classification.resnet.ResNetBottleneckBlock'>, **kwargs) glasses.models.classification.resnet.ResNet[source]¶

Creates a resnet50d model

- Returns

A resnet50d model

- Return type

glasses.models.classification.resnet.ResNet
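The number in each constructor name counts the weighted layers: the stem convolution, every convolution inside the residual blocks and the final fully connected layer (projection shortcuts are conventionally not counted). The signatures above show that resnet18 and resnet34 use ResNetBasicBlock (two convolutions per block) while resnet26 and the deeper models use ResNetBottleneckBlock (three). The stage depths in this sketch are assumptions based on the standard configurations, not values read from glasses.

```python
# Assumed stage depths (standard configurations); glasses may differ.
CONFIGS = {
    # name: (convolutions per block, blocks per stage)
    "resnet18": (2, [2, 2, 2, 2]),    # ResNetBasicBlock: two 3x3 convs
    "resnet26": (3, [2, 2, 2, 2]),    # ResNetBottleneckBlock: 1x1, 3x3, 1x1
    "resnet34": (2, [3, 4, 6, 3]),
    "resnet50": (3, [3, 4, 6, 3]),
    "resnet101": (3, [3, 4, 23, 3]),
    "resnet152": (3, [3, 8, 36, 3]),
    "resnet200": (3, [3, 24, 36, 3]),
}


def weighted_layers(convs_per_block, depths):
    # stem convolution + convolutions in all residual blocks + final linear layer
    return 1 + convs_per_block * sum(depths) + 1


for name, (convs, depths) in CONFIGS.items():
    print(name, weighted_layers(convs, depths))
```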

- training: bool¶

- class glasses.models.ResNetXt(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetHead'>, **kwargs)[source]¶

Bases:

glasses.models.classification.resnet.ResNet

Implementation of ResNetXt proposed in “Aggregated Residual Transformations for Deep Neural Networks”

Create a default model

ResNetXt.resnext50_32x4d()
ResNetXt.resnext101_32x8d()
# create a resnetxt18_32x4d
ResNetXt.resnet18(block=ResNetXtBottleNeckBlock, groups=32, base_width=4)

Examples

import torch
import torch.nn as nn
from glasses.models import ResNetXt
# change activation
ResNetXt.resnext50_32x4d(activation = nn.SELU)
# change number of classes (default is 1000)
ResNetXt.resnext50_32x4d(n_classes=100)
# pass a different block
ResNetXt.resnext50_32x4d(block=SENetBasicBlock)
# change the initial convolution
model = ResNetXt.resnext50_32x4d()
model.encoder.gate.conv1 = nn.Conv2d(3, 64, kernel_size=3)
# store each feature
x = torch.rand((1, 3, 224, 224))
model = ResNetXt.resnext50_32x4d()
# first access .features: this activates the forward hooks and tells the model you would like to get the features
model.encoder.features
model(x)
# get the features from the encoder
features = model.encoder.features
print([x.shape for x in features])
# [torch.Size([1, 64, 112, 112]), torch.Size([1, 64, 56, 56]), torch.Size([1, 128, 28, 28]), torch.Size([1, 256, 14, 14])]

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod resnext101_32x16d(*args, **kwargs) glasses.models.classification.resnetxt.ResNetXt[source]¶

Creates a resnext101_32x16d model

- Returns

A resnext101_32x16d model

- Return type

glasses.models.classification.resnetxt.ResNetXt

- classmethod resnext101_32x32d(*args, **kwargs) glasses.models.classification.resnetxt.ResNetXt[source]¶

Creates a resnext101_32x32d model

- Returns

A resnext101_32x32d model

- Return type

glasses.models.classification.resnetxt.ResNetXt

- classmethod resnext101_32x48d(*args, **kwargs) glasses.models.classification.resnetxt.ResNetXt[source]¶

Creates a resnext101_32x48d model

- Returns

A resnext101_32x48d model

- Return type

glasses.models.classification.resnetxt.ResNetXt

- classmethod resnext101_32x8d(*args, **kwargs) glasses.models.classification.resnetxt.ResNetXt[source]¶

Creates a resnext101_32x8d model

- Returns

A resnext101_32x8d model

- Return type

glasses.models.classification.resnetxt.ResNetXt

- classmethod resnext50_32x4d(*args, **kwargs) glasses.models.classification.resnetxt.ResNetXt[source]¶

Creates a resnext50_32x4d model

- Returns

A resnext50_32x4d model

- Return type

glasses.models.classification.resnetxt.ResNetXt
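In a name such as resnext50_32x4d, 32 is the number of groups (the cardinality) and 4 the base width per group, matching the `groups=32, base_width=4` arguments in the resnetxt18_32x4d example. The sketch below computes the width of the grouped 3x3 convolution in each stage with the formula used by torchvision's bottleneck block; that glasses' ResNetXtBottleNeckBlock uses the same formula is an assumption, and `bottleneck_width` is a hypothetical helper.

```python
def bottleneck_width(planes, groups=1, base_width=64):
    """Width of the (grouped) 3x3 convolution inside a bottleneck block.

    Formula assumed from torchvision's Bottleneck; not confirmed for glasses.
    """
    return int(planes * (base_width / 64.0)) * groups


STAGE_PLANES = [64, 128, 256, 512]  # per-stage base widths of a ResNet-50

print([bottleneck_width(p, groups=32, base_width=4) for p in STAGE_PLANES])  # resnext50_32x4d
print([bottleneck_width(p, groups=32, base_width=8) for p in STAGE_PLANES])  # resnext101_32x8d
print([bottleneck_width(p) for p in STAGE_PLANES])                           # plain resnet50
```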

- training: bool¶

- class glasses.models.SEResNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetHead'>, **kwargs)[source]¶

Bases:

glasses.models.classification.resnet.ResNet

Implementation of Squeeze and Excitation ResNet using both the original spatial SE and the channel SE proposed in Concurrent Spatial and Channel ‘Squeeze & Excitation’ in Fully Convolutional Networks. The models with the channel SE are labeled with the prefix c.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- classmethod se_resnet101(*args, **kwargs) glasses.models.classification.senet.SEResNet[source]¶

Original SE resnet101 with Spatial Squeeze and Excitation

- Returns

An SE resnet101 model

- Return type

glasses.models.classification.senet.SEResNet

- classmethod se_resnet152(*args, **kwargs) glasses.models.classification.senet.SEResNet[source]¶

Original SE resnet152 with Spatial Squeeze and Excitation

- Returns

An SE resnet152 model

- Return type

glasses.models.classification.senet.SEResNet

- classmethod se_resnet18(*args, **kwargs) glasses.models.classification.senet.SEResNet[source]¶

Original SE resnet18 with Spatial Squeeze and Excitation

- Returns

An SE resnet18 model

- Return type

glasses.models.classification.senet.SEResNet

- classmethod se_resnet34(*args, **kwargs) glasses.models.classification.senet.SEResNet[source]¶

Original SE resnet34 with Spatial Squeeze and Excitation

- Returns

An SE resnet34 model

- Return type

glasses.models.classification.senet.SEResNet

- classmethod se_resnet50(*args, **kwargs) glasses.models.classification.senet.SEResNet[source]¶

Original SE resnet50 with Spatial Squeeze and Excitation

- Returns

An SE resnet50 model

- Return type

glasses.models.classification.senet.SEResNet
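The squeeze-and-excitation step itself is small. Below is a framework-free sketch of the original channel-reweighting SE block: global average pooling squeezes each channel to a single number, two fully connected layers with a ReLU and a sigmoid turn those numbers into per-channel weights, and every channel is rescaled by its weight. It is not the glasses implementation; the `squeeze_excitation` helper, the nested-list tensor layout and the toy weights are hypothetical, and biases are omitted.

```python
import math


def squeeze_excitation(x, w1, w2):
    """Channel SE on a C x H x W tensor stored as nested lists.

    x:  list of C channels, each an H x W list of lists
    w1: (C // r) x C weights of the first fully connected layer
    w2: C x (C // r) weights of the second fully connected layer
    """
    # squeeze: global average pooling gives one descriptor per channel
    z = [sum(map(sum, ch)) / (len(ch) * len(ch[0])) for ch in x]
    # excitation: FC -> ReLU -> FC -> sigmoid gives one weight in (0, 1) per channel
    hidden = [max(0.0, sum(w * v for w, v in zip(row, z))) for row in w1]
    scales = [1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden)))) for row in w2]
    # scale: multiply every value of a channel by that channel's weight
    out = [[[v * s for v in row] for row in ch] for ch, s in zip(x, scales)]
    return out, scales


x = [[[1.0, 2.0], [3.0, 4.0]], [[0.0, 0.0], [0.0, 8.0]]]  # C=2, H=W=2
w1 = [[1.0, 1.0]]    # reduction ratio r = 2, so one hidden unit
w2 = [[0.0], [0.0]]  # zero weights: every channel is scaled by sigmoid(0) = 0.5
out, scales = squeeze_excitation(x, w1, w2)
print(scales)  # [0.5, 0.5]
```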

- training: bool¶

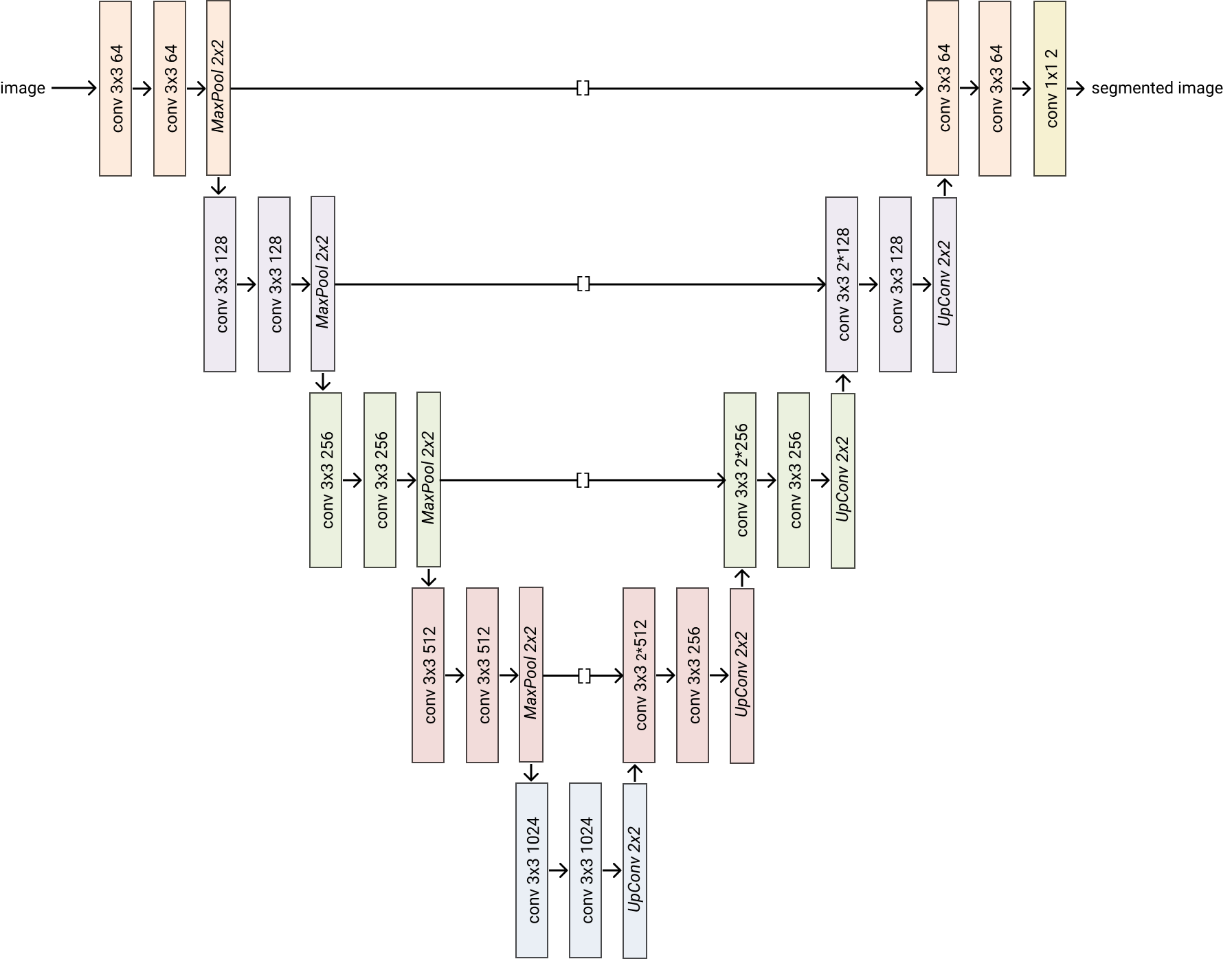

- class glasses.models.UNet(in_channels: int = 1, n_classes: int = 2, encoder: glasses.models.base.Encoder = <class 'glasses.models.segmentation.unet.UNetEncoder'>, decoder: torch.nn.modules.module.Module = <class 'glasses.models.segmentation.unet.UNetDecoder'>, **kwargs)[source]¶

Bases:

glasses.models.segmentation.base.SegmentationModule

Implementation of UNet proposed in U-Net: Convolutional Networks for Biomedical Image Segmentation

Examples

Default models

>>> UNet()

You can easily customize your model

>>> from functools import partial
>>> import torch.nn as nn
>>> # change activation
>>> UNet(activation=nn.SELU)
>>> # change number of classes (default is 2)
>>> UNet(n_classes=10)
>>> # change encoder
>>> unet = UNet(encoder=lambda *args, **kwargs: ResNet.resnet26(*args, **kwargs).encoder,)
>>> unet = UNet(encoder=lambda *args, **kwargs: EfficientNet.efficientnet_b2(*args, **kwargs).encoder,)
>>> # change decoder
>>> UNet(decoder=partial(UNetDecoder, widths=[256, 128, 64, 32, 16]))
>>> # pass a different block to the encoder
>>> UNet(encoder=partial(UNetEncoder, block=SENetBasicBlock))
>>> # all *Encoder classes can be used directly
>>> unet = UNet(encoder=partial(ResNetEncoder, block=ResNetBottleneckBlock, depths=[2,2,2,2]))

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 1.

n_classes (int, optional) – Number of classes. Defaults to 2.

encoder (Encoder, optional) – The encoder used to extract features. Defaults to UNetEncoder.

decoder (nn.Module, optional) – The decoder applied to the encoder features. Defaults to UNetDecoder.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- training: bool¶
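When swapping encoders and decoders it helps to keep track of how shapes flow through the skip connections. The bookkeeping sketch below assumes same-padded convolutions, 2x down- and upsampling between stages and channel widths that double at each encoder stage. The widths [64, 128, 256, 512, 1024] come from the original U-Net paper and are not necessarily the UNetEncoder defaults; `unet_shapes` is a hypothetical helper.

```python
def unet_shapes(height, width, widths):
    """Trace shapes through a U-Net style encoder/decoder.

    Returns encoder entries (channels, H, W) and decoder entries
    (channels after concatenation, channels out, H, W). Assumes H and W
    are divisible by 2 ** (len(widths) - 1).
    """
    encoder = []
    for i, channels in enumerate(widths):
        if i > 0:
            height, width = height // 2, width // 2  # 2x downsampling between stages
        encoder.append((channels, height, width))
    decoder = []
    for skip_channels, skip_h, skip_w in reversed(encoder[:-1]):
        # the upsampled path is assumed to be projected to skip_channels, then
        # concatenated with the skip connection (doubling the channels) and
        # reduced back to skip_channels by the stage convolutions
        decoder.append((2 * skip_channels, skip_channels, skip_h, skip_w))
    return encoder, decoder


encoder_shapes, decoder_shapes = unet_shapes(256, 256, [64, 128, 256, 512, 1024])
print(encoder_shapes)
print(decoder_shapes)
```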

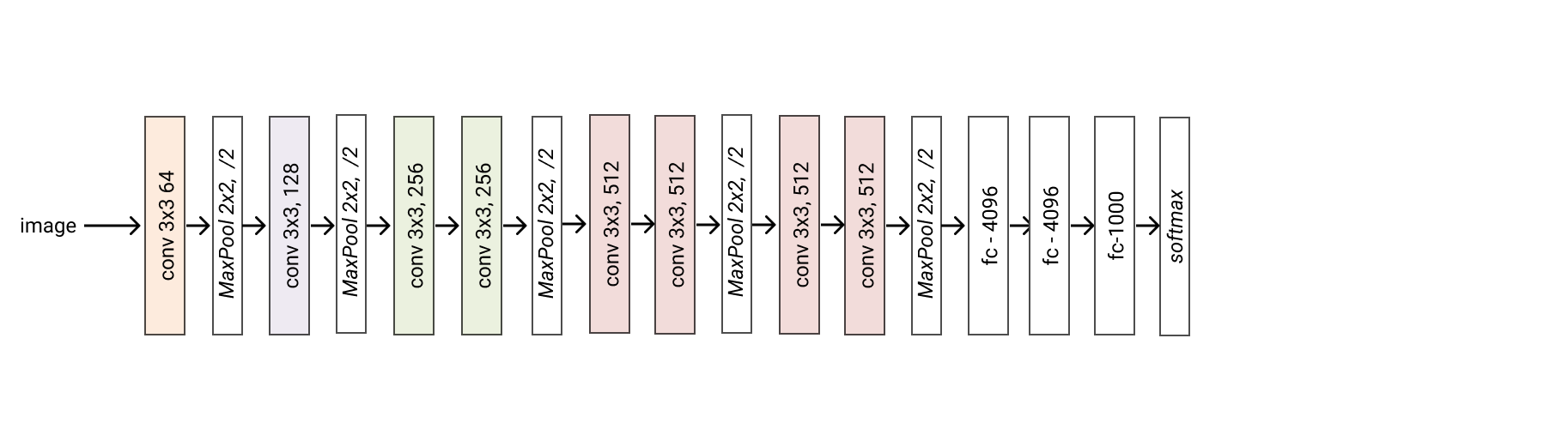

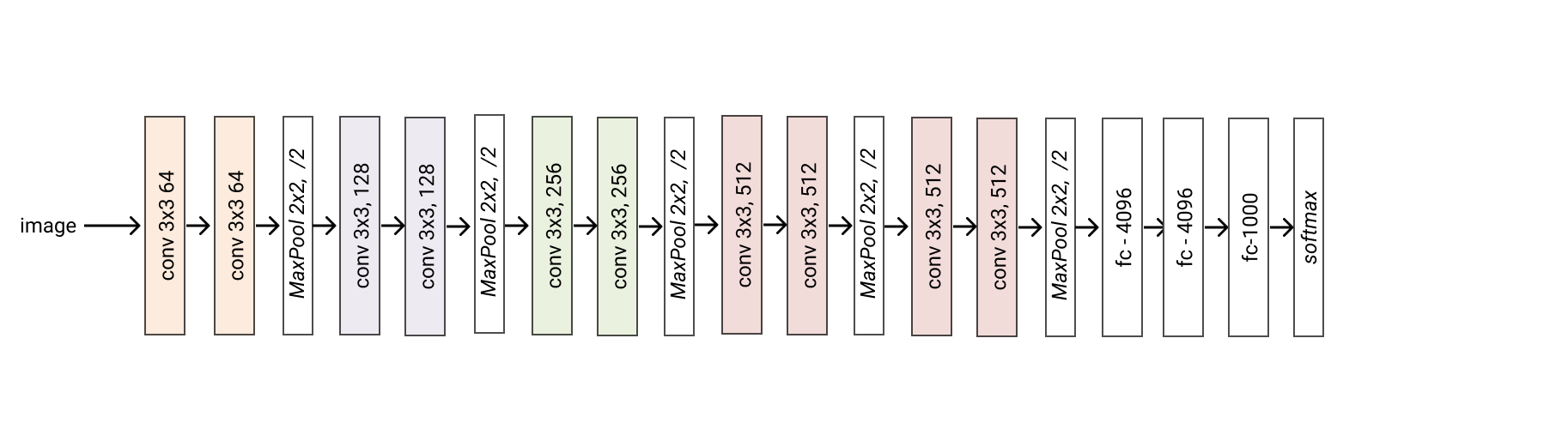

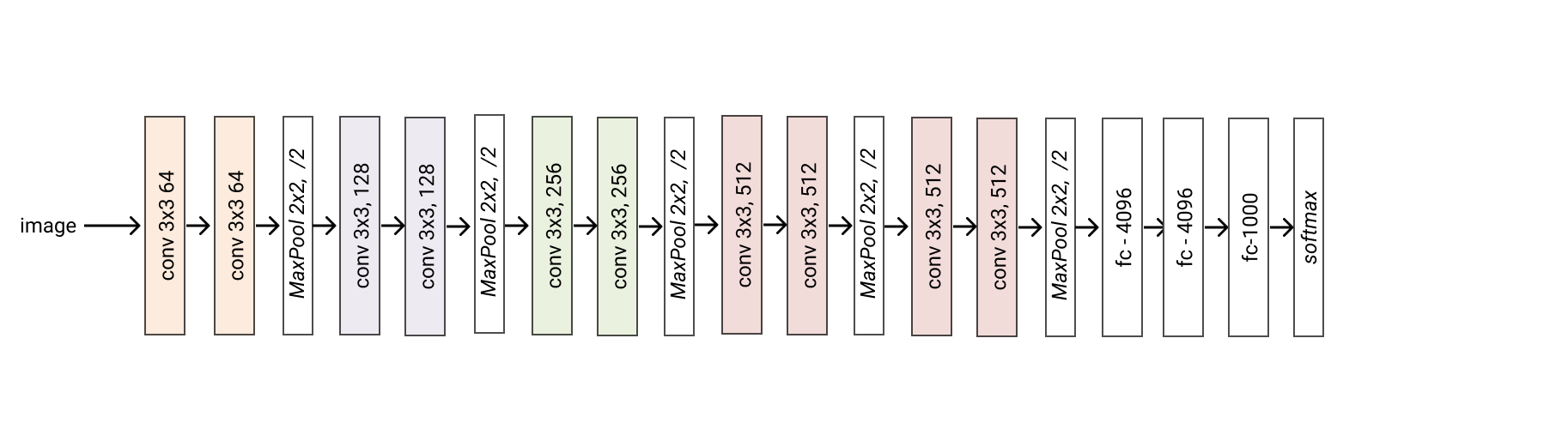

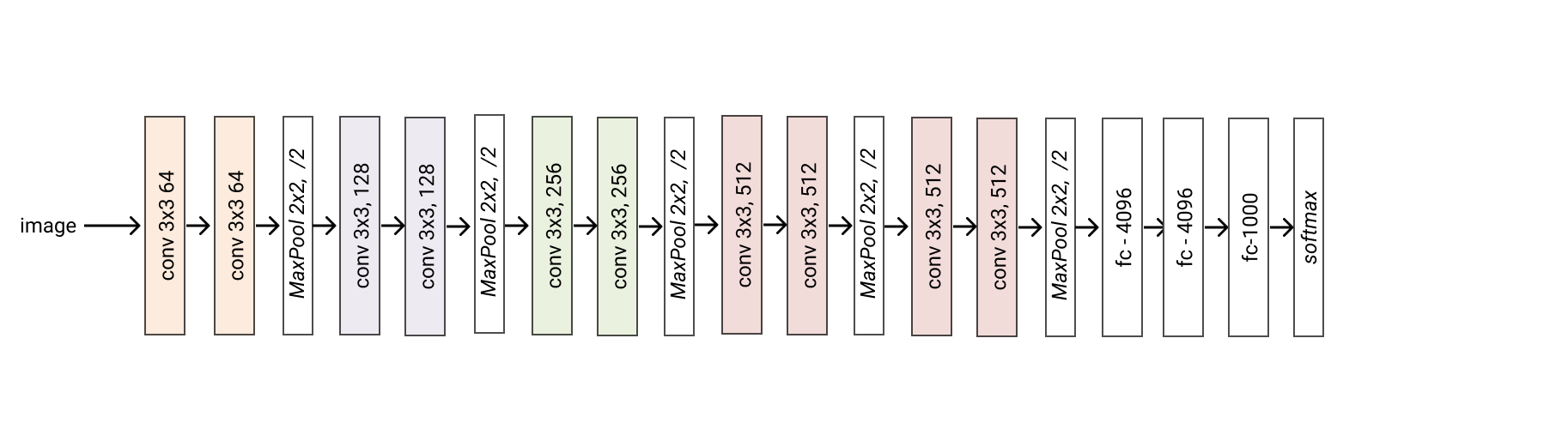

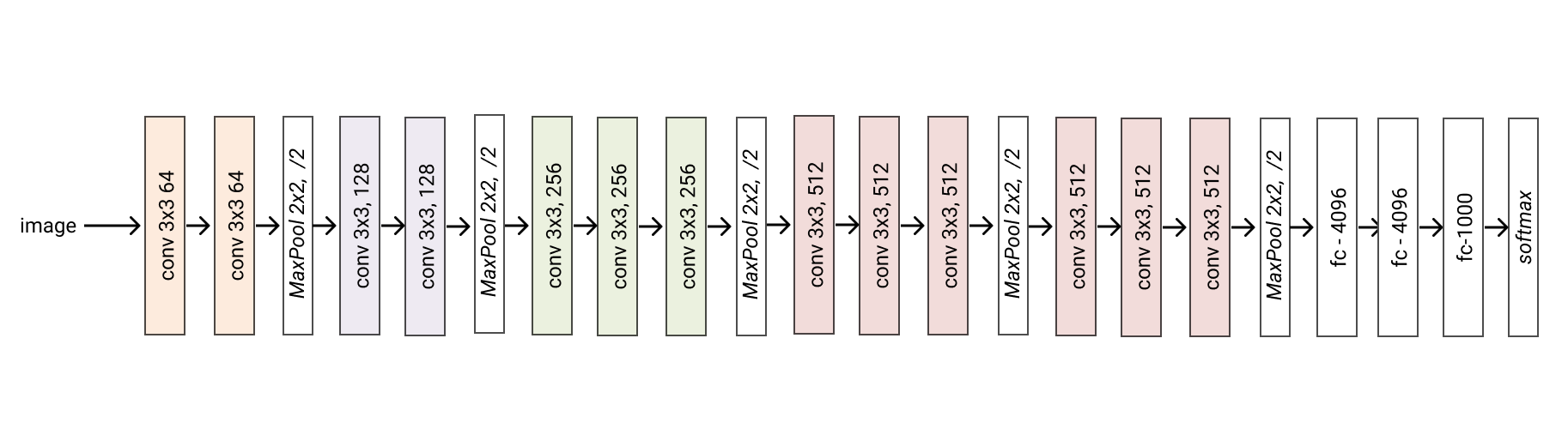

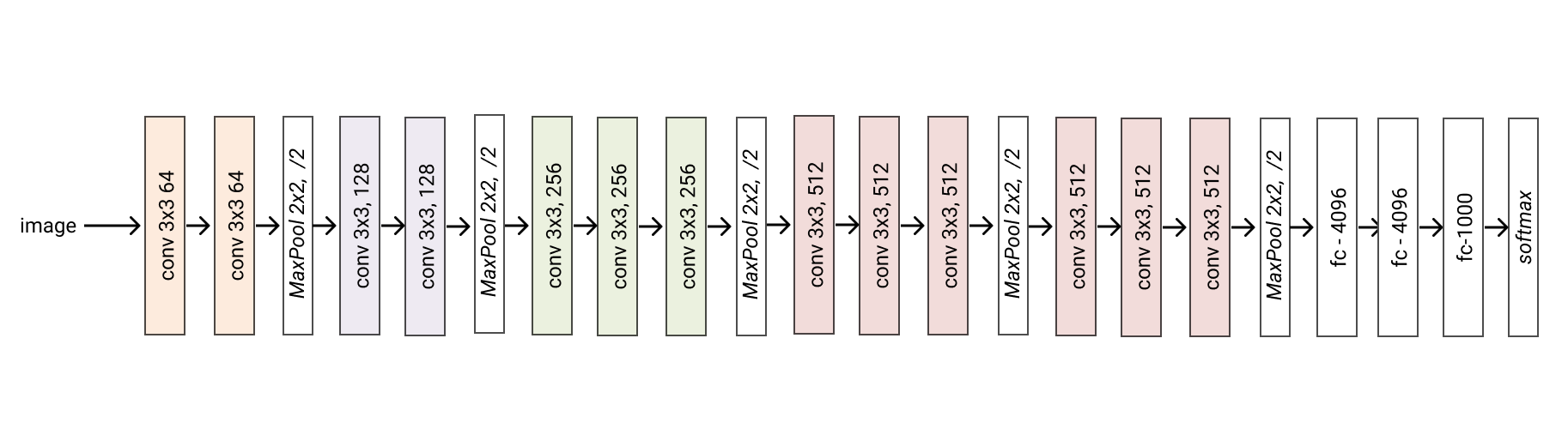

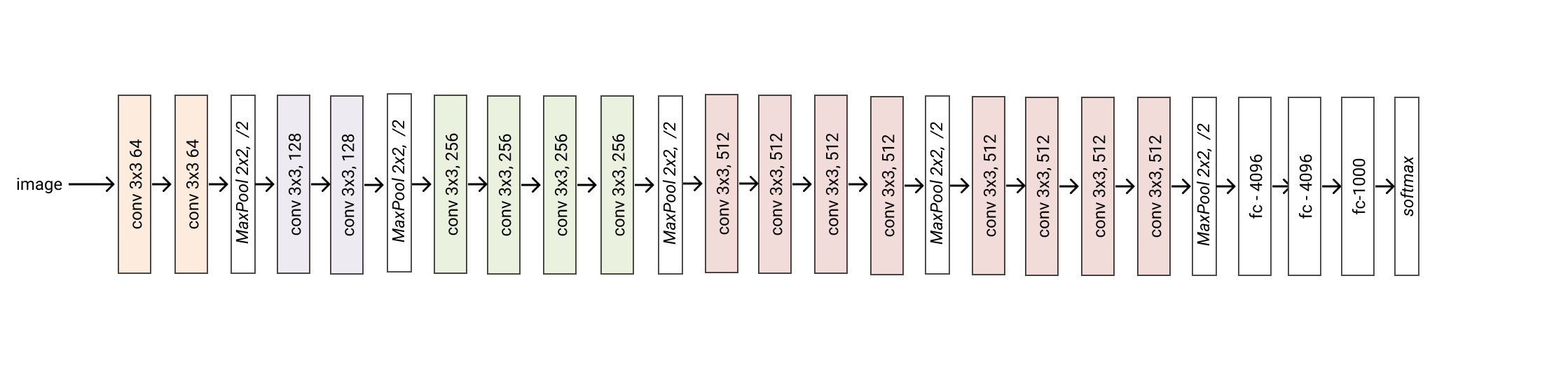

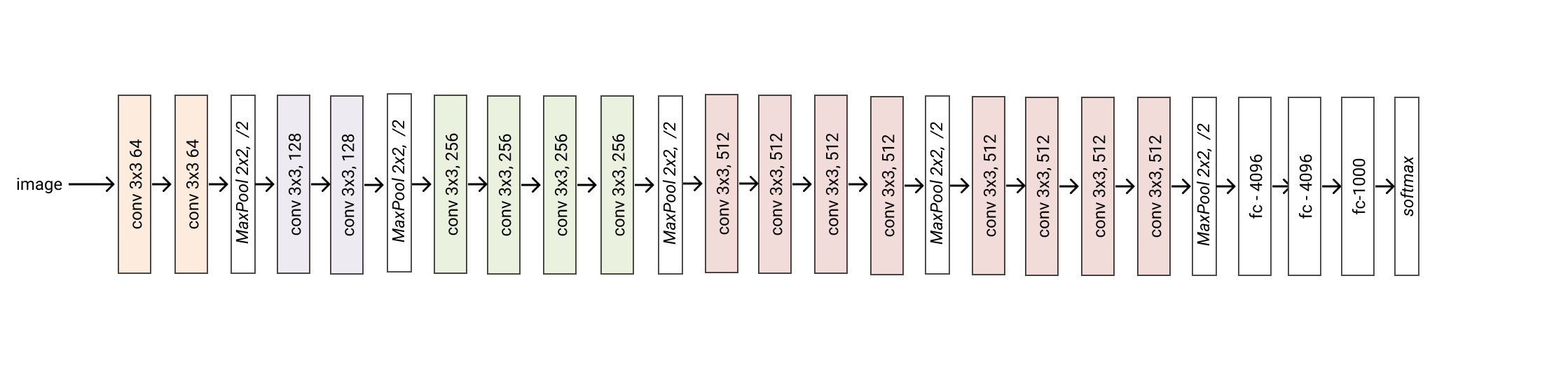

- class glasses.models.VGG(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.vgg.VGGEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.vgg.VGGHead'>, **kwargs)[source]¶

Bases:

glasses.models.classification.base.ClassificationModule

Implementation of VGG proposed in Very Deep Convolutional Networks For Large-Scale Image Recognition

VGG.vgg11()
VGG.vgg13()
VGG.vgg16()
VGG.vgg19()
VGG.vgg11_bn()
VGG.vgg13_bn()
VGG.vgg16_bn()
VGG.vgg19_bn()

Please be aware that the bn models use BatchNorm, but they are old enough that their convolutions still have a bias: back then people did not know that the bias is superfluous in a convolution followed by batch normalization.
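The reason is that batch normalization subtracts the per-channel mean over the batch, so a constant bias added by the preceding convolution cancels out, and BatchNorm's own learnable shift takes over that role. A tiny numeric check in plain Python, using a hypothetical `batch_norm` helper without the affine parameters and toy values for a single channel:

```python
import math


def batch_norm(values, eps=1e-5):
    """Normalize a batch of scalars to zero mean and unit variance (no affine)."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]


conv_outputs = [0.3, -1.2, 2.5, 0.9]  # toy pre-activation values of one channel
bias = 4.0                             # a constant conv bias added to every value
with_bias = batch_norm([v + bias for v in conv_outputs])
without_bias = batch_norm(conv_outputs)
# the mean subtraction removes the constant bias, so both results match
print(all(math.isclose(a, b, abs_tol=1e-9) for a, b in zip(with_bias, without_bias)))  # True
```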

Examples

import torch.nn as nn
from glasses.models import VGG
from glasses.models.classification.senet import SENetBasicBlock
# change activation
VGG.vgg11(activation = nn.SELU)
# change number of classes (default is 1000)
VGG.vgg11(n_classes=100)
# pass a different block
VGG.vgg11(block=SENetBasicBlock)

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- training: bool¶

- classmethod vgg11(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg11 model

- Returns

A vgg11 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg11_bn(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg11 model with batchnorm

- Returns

A vgg11 model with batchnorm

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg13(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg13 model

- Returns

A vgg13 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg13_bn(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg13 model with batchnorm

- Returns

A vgg13 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg16(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg16 model

- Returns

A vgg16 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg16_bn(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg16 model with batchnorm

- Returns

A vgg16 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg19(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg19 model

- Returns

A vgg19 model

- Return type

glasses.models.classification.vgg.VGG

- classmethod vgg19_bn(*args, **kwargs) glasses.models.classification.vgg.VGG[source]¶

Creates a vgg19 model with batchnorm

- Returns

A vgg19 model

- Return type

glasses.models.classification.vgg.VGG
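As with ResNet, the number in each VGG name counts the weighted layers: the 3x3 convolutions plus the fully connected layers of the classifier. The sketch assumes the standard configurations from the VGG paper ("M" marks a 2x2 max pool) and a three-layer head; glasses' VGGEncoder and VGGHead may be organized differently. The _bn variants add batch normalization after each convolution and do not change the count.

```python
# Assumed standard VGG configurations; "M" marks a 2x2 max pool.
CONFIGS = {
    "vgg11": [64, "M", 128, "M", 256, 256, "M", 512, 512, "M", 512, 512, "M"],
    "vgg13": [64, 64, "M", 128, 128, "M", 256, 256, "M", 512, 512, "M", 512, 512, "M"],
    "vgg16": [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
              512, 512, 512, "M", 512, 512, 512, "M"],
    "vgg19": [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
              512, 512, 512, 512, "M", 512, 512, 512, 512, "M"],
}
FC_LAYERS = 3  # assumed three fully connected layers in the classifier


def weighted_layers(config):
    convs = sum(1 for item in config if item != "M")
    return convs + FC_LAYERS


def output_size(config, size=224):
    # 3x3 convs with padding 1 keep the size; every max pool halves it
    for item in config:
        if item == "M":
            size //= 2
    return size


for name, config in CONFIGS.items():
    print(name, weighted_layers(config), output_size(config))
```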

- class glasses.models.WideResNet(encoder: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetEncoder'>, head: torch.nn.modules.module.Module = <class 'glasses.models.classification.resnet.ResNetHead'>, **kwargs)[source]¶

Bases:

glasses.models.classification.resnet.ResNet

Implementation of Wide ResNet proposed in “Wide Residual Networks”

Create a default model

WideResNet.wide_resnet50_2()
WideResNet.wide_resnet101_2()
# create a wide_resnet18_4
WideResNet.resnet18(block=WideResNetBottleNeckBlock, width_factor=4)

Examples

import torch
import torch.nn as nn
from glasses.models import WideResNet
# change activation
WideResNet.wide_resnet50_2(activation = nn.SELU)
# change number of classes (default is 1000)
WideResNet.wide_resnet50_2(n_classes=100)
# pass a different block
WideResNet.wide_resnet50_2(block=SENetBasicBlock)
# change the initial convolution
model = WideResNet.wide_resnet50_2()
model.encoder.gate.conv1 = nn.Conv2d(3, 64, kernel_size=3)
# store each feature
x = torch.rand((1, 3, 224, 224))
model = WideResNet.wide_resnet50_2()
features = []
x = model.encoder.gate(x)
for block in model.encoder.layers:
    x = block(x)
    features.append(x)
print([x.shape for x in features])
# [torch.Size([1, 256, 56, 56]), torch.Size([1, 512, 28, 28]), torch.Size([1, 1024, 14, 14]), torch.Size([1, 2048, 7, 7])]

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- training: bool¶

- classmethod wide_resnet101_2(*args, **kwargs) glasses.models.classification.wide_resnet.WideResNet[source]¶

Creates a wide_resnet101_2 model

- Returns

A wide_resnet101_2 model

- Return type

glasses.models.classification.wide_resnet.WideResNet

- classmethod wide_resnet50_2(*args, **kwargs) glasses.models.classification.wide_resnet.WideResNet[source]¶

Creates a wide_resnet50_2 model

- Returns

A wide_resnet50_2 model

- Return type

glasses.models.classification.wide_resnet.WideResNet
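The _2 suffix, like `width_factor` in the wide_resnet18_4 example, widens the 3x3 convolution inside each bottleneck block. Assuming, as in torchvision's wide_resnet50_2, that only this inner width is multiplied while the block's input and output channels stay the same, a quick weight count (ignoring biases and batch normalization) shows what doubling costs; `bottleneck_params` is a hypothetical helper.

```python
def bottleneck_params(in_channels, width, out_channels):
    """Weights of a bottleneck block: 1x1 reduce, 3x3 conv, 1x1 expand (no bias/BN)."""
    return in_channels * width + width * width * 9 + width * out_channels


# a first-stage block of resnet50 vs. wide_resnet50_2 (inner width doubled)
standard = bottleneck_params(256, 64, 256)
wide = bottleneck_params(256, 64 * 2, 256)
print(standard, wide, round(wide / standard, 2))  # 69632 212992 3.06
```

Doubling the inner width roughly triples the weights of the block, because the 3x3 convolution, which dominates the count, grows quadratically with the width.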