glasses.models.classification.regnet package

Module contents

- class glasses.models.classification.regnet.RegNet(encoder: torch.nn.modules.module.Module = functools.partial(<class 'glasses.models.classification.resnet.ResNetEncoder'>, start_features=32, stem=functools.partial(<class 'glasses.nn.blocks.ConvBnAct'>, kernel_size=3, stride=2), downsample_first=True, block=<class 'glasses.models.classification.regnet.RegNetXBotteneckBlock'>), *args, **kwargs)

Bases: glasses.models.classification.resnet.ResNet

Implementation of RegNet proposed in Designing Network Design Spaces.

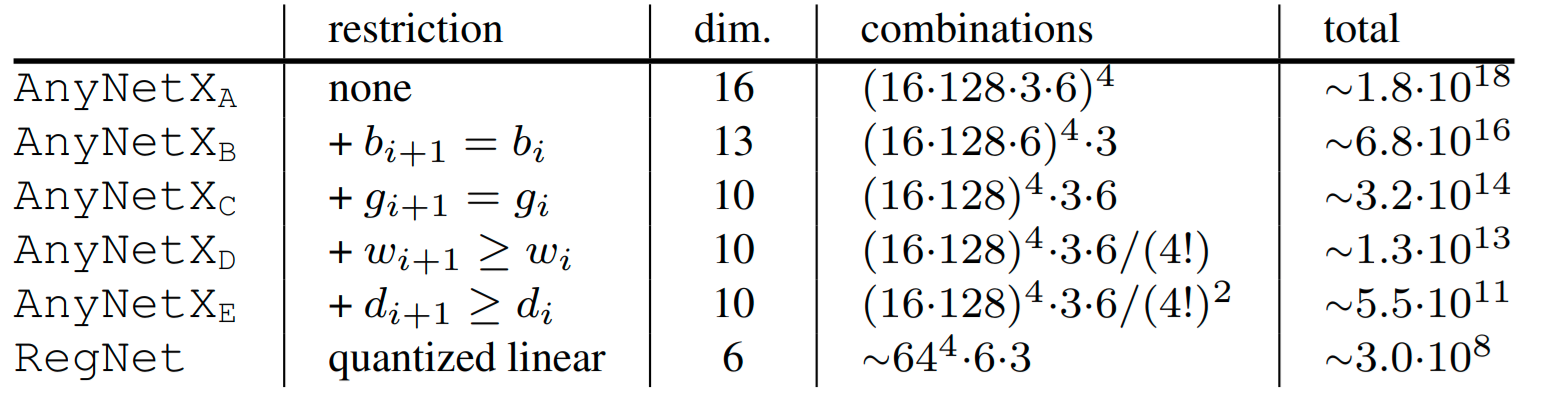

The main idea is to start with a high dimensional search space and iteratively reduce the search space by empirically apply constrains based on the best performing models sampled by the current search space.

The resulting models are light, accurate, and faster than EfficientNets (up to 5x times!)

For example, to go from \(AnyNet_A\) to \(AnyNet_B\) they fixed the bottleneck ratio \(b_i\) for all stage \(i\). The following table shows all the restrictions applied from one search space to the next one.

The paper is really well written and very interesting, I highly recommended read it.

    RegNet.regnetx_002()
    RegNet.regnetx_004()
    RegNet.regnetx_006()
    RegNet.regnetx_008()
    RegNet.regnetx_016()
    RegNet.regnetx_040()
    RegNet.regnetx_064()
    RegNet.regnetx_080()
    RegNet.regnetx_120()
    RegNet.regnetx_160()
    RegNet.regnetx_320()
    # Y variants (with SE)
    RegNet.regnety_002()
    # ...
    RegNet.regnety_320()

You can easily customize your model.

Examples

    from functools import partial

    import torch
    from torch import nn
    from glasses.models.classification.regnet import RegNet, RegNetYBotteneckBlock
    # assumed import path for the ResNet helpers used below
    from glasses.models.classification.resnet import ResNetShorcutD, ResNetStemC

    # change activation
    RegNet.regnetx_004(activation=nn.SELU)
    # change number of classes (default is 1000)
    RegNet.regnetx_004(n_classes=100)
    # pass a different block
    RegNet.regnetx_004(block=RegNetYBotteneckBlock)
    # change the stem
    model = RegNet.regnetx_004(stem=ResNetStemC)
    # change shortcut
    model = RegNet.regnetx_004(block=partial(RegNetYBotteneckBlock, shortcut=ResNetShorcutD))
    # store each feature
    x = torch.rand((1, 3, 224, 224))
    # get features
    model = RegNet.regnetx_004()
    # first access .features: this activates the forward hooks and tells the model you'd like to get the features
    model.encoder.features
    model(torch.randn((1, 3, 224, 224)))
    # get the features from the encoder
    features = model.encoder.features
    print([x.shape for x in features])
    # [torch.Size([1, 32, 112, 112]), torch.Size([1, 32, 56, 56]), torch.Size([1, 64, 28, 28]), torch.Size([1, 160, 14, 14])]

- Parameters

in_channels (int, optional) – Number of channels in the input Image (3 for RGB and 1 for Gray). Defaults to 3.

n_classes (int, optional) – Number of classes. Defaults to 1000.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- models_config = {
      'regnetx_002': ([1, 1, 4, 7], [24, 56, 152, 368], 8),
      'regnetx_004': ([1, 2, 7, 12], [32, 64, 160, 384], 16),
      'regnetx_006': ([1, 3, 5, 7], [48, 96, 240, 528], 24),
      'regnetx_008': ([1, 3, 7, 5], [64, 128, 288, 672], 16),
      'regnetx_016': ([2, 4, 10, 2], [72, 168, 408, 912], 24),
      'regnetx_032': ([2, 6, 15, 2], [96, 192, 432, 1008], 48),
      'regnetx_040': ([2, 5, 14, 2], [80, 240, 560, 1360], 40),
      'regnetx_064': ([2, 4, 10, 1], [168, 392, 784, 1624], 56),
      'regnetx_080': ([2, 5, 15, 1], [80, 240, 720, 1920], 80),
      'regnetx_120': ([2, 5, 11, 1], [224, 448, 896, 2240], 112),
      'regnetx_160': ([2, 6, 13, 1], [256, 512, 896, 2048], 128),
      'regnetx_320': ([2, 7, 13, 1], [336, 672, 1344, 2520], 168),
      'regnety_002': ([1, 1, 4, 7], [24, 56, 152, 368], 8),
      'regnety_004': ([1, 3, 6, 6], [48, 104, 208, 440], 8),
      'regnety_006': ([1, 3, 7, 4], [48, 112, 256, 608], 16),
      'regnety_008': ([1, 3, 8, 2], [64, 128, 320, 768], 16),
      'regnety_016': ([2, 6, 17, 2], [48, 120, 336, 888], 24),
      'regnety_032': ([2, 5, 13, 1], [72, 216, 576, 1512], 24),
      'regnety_040': ([2, 6, 12, 2], [128, 192, 512, 1088], 64),
      'regnety_064': ([2, 7, 14, 2], [144, 288, 576, 1296], 72),
      'regnety_080': ([2, 4, 10, 1], [168, 448, 896, 2016], 56),
      'regnety_120': ([2, 5, 11, 1], [224, 448, 896, 2240], 112),
      'regnety_160': ([2, 4, 11, 1], [224, 448, 1232, 3024], 112),
      'regnety_320': ([2, 5, 12, 1], [232, 696, 1392, 3712], 232),
  }

- training: bool
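The entries of models_config are not described on this page. The sketch below shows one way to read them, assuming each tuple is ordered as (depths per stage, widths per stage, groups_width); that ordering is an interpretation, and `describe` is a hypothetical helper, not part of glasses. The two entries are copied from models_config.

```python
# Two entries copied verbatim from models_config.
models_config = {
    "regnetx_002": ([1, 1, 4, 7], [24, 56, 152, 368], 8),
    "regnety_004": ([1, 3, 6, 6], [48, 104, 208, 440], 8),
}


def describe(name):
    """Summarize one configuration (hypothetical helper)."""
    # Assumed field order: depths, widths, groups_width.
    depths, widths, groups_width = models_config[name]
    return {
        "stages": len(depths),
        "total_blocks": sum(depths),
        "widest_stage": max(widths),
        "groups_width": groups_width,
    }


summary = describe("regnetx_002")
print(summary)
```

Under that reading, regnetx_002 has four stages and 13 blocks in total.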

- glasses.models.classification.regnet.RegNetEncoder = functools.partial(<class 'glasses.models.classification.resnet.ResNetEncoder'>, start_features=32, stem=functools.partial(<class 'glasses.nn.blocks.ConvBnAct'>, kernel_size=3, stride=2), downsample_first=True, block=<class 'glasses.models.classification.regnet.RegNetXBotteneckBlock'>)

RegNet’s encoder is a variant of the ResNet encoder: the original stem is replaced by RegNetStem, the first layer also applies stride=2, and the starting features are always set to 32.
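RegNetEncoder is defined as a functools.partial rather than a subclass. The sketch below illustrates that pattern with a stand-in class: `ToyEncoder` and `ToyRegNetEncoder` are hypothetical names used only for illustration, and the constructor arguments merely mirror the ones listed in the signature.

```python
from functools import partial


class ToyEncoder:
    """Hypothetical stand-in for an encoder class; not part of glasses."""

    def __init__(self, in_channels=3, start_features=64, downsample_first=False):
        self.in_channels = in_channels
        self.start_features = start_features
        self.downsample_first = downsample_first


# partial pre-fills keyword arguments and returns a new callable.
ToyRegNetEncoder = partial(ToyEncoder, start_features=32, downsample_first=True)

# Arguments that were not pre-filled can still be passed as usual.
encoder = ToyRegNetEncoder(in_channels=1)

# Pre-filled keyword arguments are defaults and can be overridden by the caller.
overridden = ToyRegNetEncoder(start_features=48)

print(encoder.start_features, encoder.downsample_first, overridden.start_features)
```

Because the presets are ordinary keyword defaults, a caller can still replace any of them at construction time.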

- class glasses.models.classification.regnet.RegNetScaler

Bases: object

Generates per-stage widths and depths from RegNet parameters. Code borrowed from the original implementation.
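As a rough illustration of what generating per-stage widths and depths involves, the sketch below follows the quantized linear parameterization described in the paper. The function name `regnet_widths_depths` is hypothetical and is not the RegNetScaler API. The parameter values are assumed to be those of the reference RegNetX 200MF configuration, and the adjustment that makes widths compatible with the group width is left out.

```python
import math
from itertools import groupby


def regnet_widths_depths(w_a, w_0, w_m, depth, q=8):
    """Return (stage_widths, stage_depths) for a RegNet (hypothetical helper)."""
    block_widths = []
    for j in range(depth):
        u_j = w_0 + w_a * j                                   # linear width for block j
        s_j = round(math.log(u_j / w_0) / math.log(w_m))      # nearest power of w_m
        w_j = w_0 * w_m ** s_j                                # piecewise-constant width
        block_widths.append(int(round(w_j / q) * q))          # quantize to a multiple of q

    # Consecutive blocks with the same width form one stage.
    stage_widths, stage_depths = [], []
    for width, blocks in groupby(block_widths):
        stage_widths.append(width)
        stage_depths.append(len(list(blocks)))
    return stage_widths, stage_depths


# Assumed parameters of the 200MF RegNetX configuration.
widths, depths = regnet_widths_depths(w_a=36.44, w_0=24, w_m=2.49, depth=13)
print(widths, depths)  # [24, 56, 152, 368] [1, 1, 4, 7]
```

With these inputs the result matches the 'regnetx_002' entry of models_config.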

- glasses.models.classification.regnet.RegNetStem = functools.partial(<class 'glasses.nn.blocks.ConvBnAct'>, kernel_size=3, stride=2)

RegNet’s stem: just a Conv-Bn-Act with stride=2.

- class glasses.models.classification.regnet.RegNetXBotteneckBlock(in_features: int, out_features: int, groups_width: int = 1, **kwargs)

Bases: glasses.models.classification.resnet.ResNetBottleneckBlock

RegNet block modified from ResNeXt; the bottleneck reduction (b in the paper) is fixed to 1.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- training: bool
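The groups_width argument fixes the number of channels per group rather than the number of groups. A minimal sketch of the arithmetic, assuming the block derives the group count of its 3x3 convolution as out_features // groups_width (an assumption about the implementation; `groups_per_stage` is a hypothetical helper):

```python
def groups_per_stage(widths, groups_width):
    """Number of convolution groups per stage for a fixed group width."""
    for width in widths:
        if width % groups_width != 0:
            raise ValueError(f"width {width} is not divisible by {groups_width}")
    return [width // groups_width for width in widths]


# Widths and groups_width of the 'regnetx_004' entry in models_config.
groups = groups_per_stage([32, 64, 160, 384], groups_width=16)
print(groups)  # [2, 4, 10, 24]
```

Wider stages therefore get more groups, while every group keeps the same number of channels.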

- class glasses.models.classification.regnet.RegNetYBotteneckBlock(in_features: int, out_features: int, reduction: int = 4, **kwargs)

Bases: glasses.models.classification.regnet.RegNetXBotteneckBlock

RegNetXBotteneckBlock with Squeeze and Excitation. Unlike SEResNet, the SE module is applied after the 3x3 conv.

Note

This block is wrong, but it follows the official doc, where the inner features of the SE module are in_features // reduction. A correct implementation of SE should compute the inner features from self.features.

Initializes internal Module state, shared by both nn.Module and ScriptModule.

- training: bool
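The difference described in the note on RegNetYBotteneckBlock only shows up when a block changes the number of channels. A small sketch of the two choices, assuming self.features equals out_features because the bottleneck reduction is 1 (`se_inner_features` is a hypothetical helper, not a glasses function):

```python
def se_inner_features(features, reduction=4):
    """Width of the hidden layer inside a Squeeze-and-Excitation module."""
    return features // reduction


in_features, out_features = 32, 64

# Behaviour described in the note: derived from the block's input width.
documented = se_inner_features(in_features)

# Alternative suggested by the note: derived from the width the SE module sees.
suggested = se_inner_features(out_features)

print(documented, suggested)  # 8 16
```

When in_features equals out_features, both choices give the same SE width.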